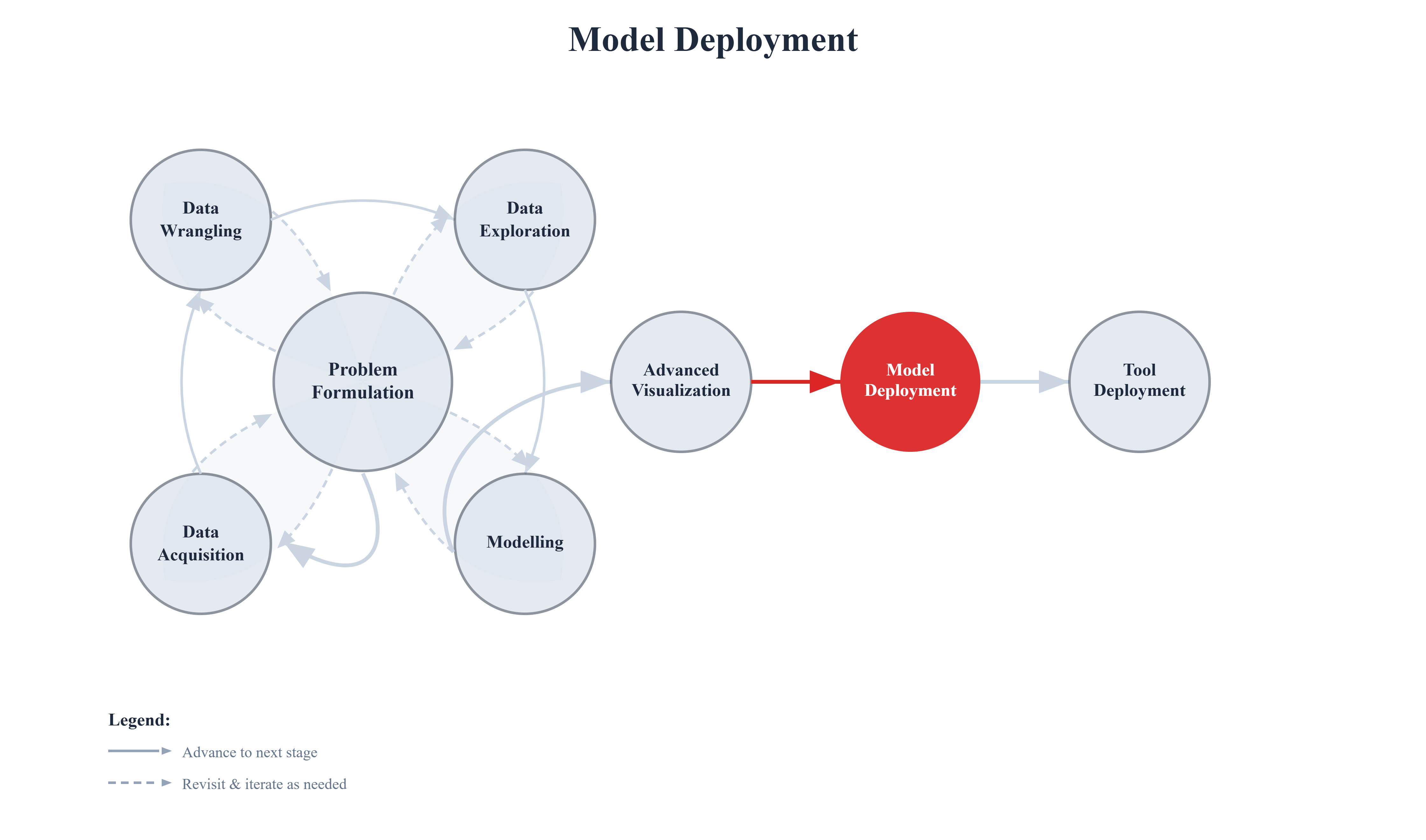

8. Model Deployment#

A model that lives only in a notebook creates no value. It does not matter how sophisticated the architecture, how carefully the features were engineered, or how high the cross-validation score—if the model never receives real-world inputs and returns real-world predictions, it is an academic exercise. Deployment is what transforms machine learning work into impact.

Deployment is the act of moving a trained model out of the experimental environment and into a system where it can receive inputs, produce predictions, and be relied upon by other people and processes. It is the bridge between data science and engineering, between a proof of concept and a product.

The Gap Between a Notebook and Production

The notebook environment is deliberately forgiving. Data is pre-loaded, dependencies are already installed, and errors are caught and fixed interactively. Production is the opposite: it is unattended, often distributed, expected to handle unexpected inputs gracefully, and measured on uptime and latency as much as accuracy.

This gap is surprisingly wide. A model trained in three hours can take weeks to deploy correctly if the team has not planned for:

Reproducibility — Can the model be re-created from scratch? Can predictions be reproduced exactly?

Portability — Does the model run on the target hardware, operating system, and Python version?

Scalability — Can the serving infrastructure handle hundreds or thousands of simultaneous requests?

Latency — Does the model return predictions fast enough for the use case (milliseconds for fraud detection, seconds for a recommendation engine)?

Maintainability — Can the model be updated, rolled back, or replaced without downtime?

Ignoring any one of these dimensions is a common reason why promising models never reach users.

The Business Cost of Not Deploying Well

Model deployment failures are not just technical inconveniences—they carry real costs. A fraud detection model that cannot scale under peak transaction load is useless at precisely the moment it is needed most. A recommendation engine that returns stale predictions because the model cannot be updated without a full redeploy slowly loses relevance. A churn prediction pipeline that works on a data scientist’s laptop but crashes on the production server wastes the months of work that went into building it.

Conversely, organizations that invest in robust deployment practices compound their returns: each model they ship teaches the team how to ship the next one faster, safer, and more reliably. Deployment infrastructure is a force multiplier for the entire data science function.

This chapter addresses these concerns directly. We start with serialization—how to save a trained model to disk and reload it later without retraining. We then address containerization—how to package a model together with all its dependencies so it runs identically on any machine. Finally, we survey the broader deployment landscape, examining where and how models are typically served in industry.

These three topics build on each other. A model must be serialized before it can be shipped. It must be containerized before it can be deployed reliably. And understanding the deployment landscape helps you choose the right strategy for your scale, latency requirements, and team.