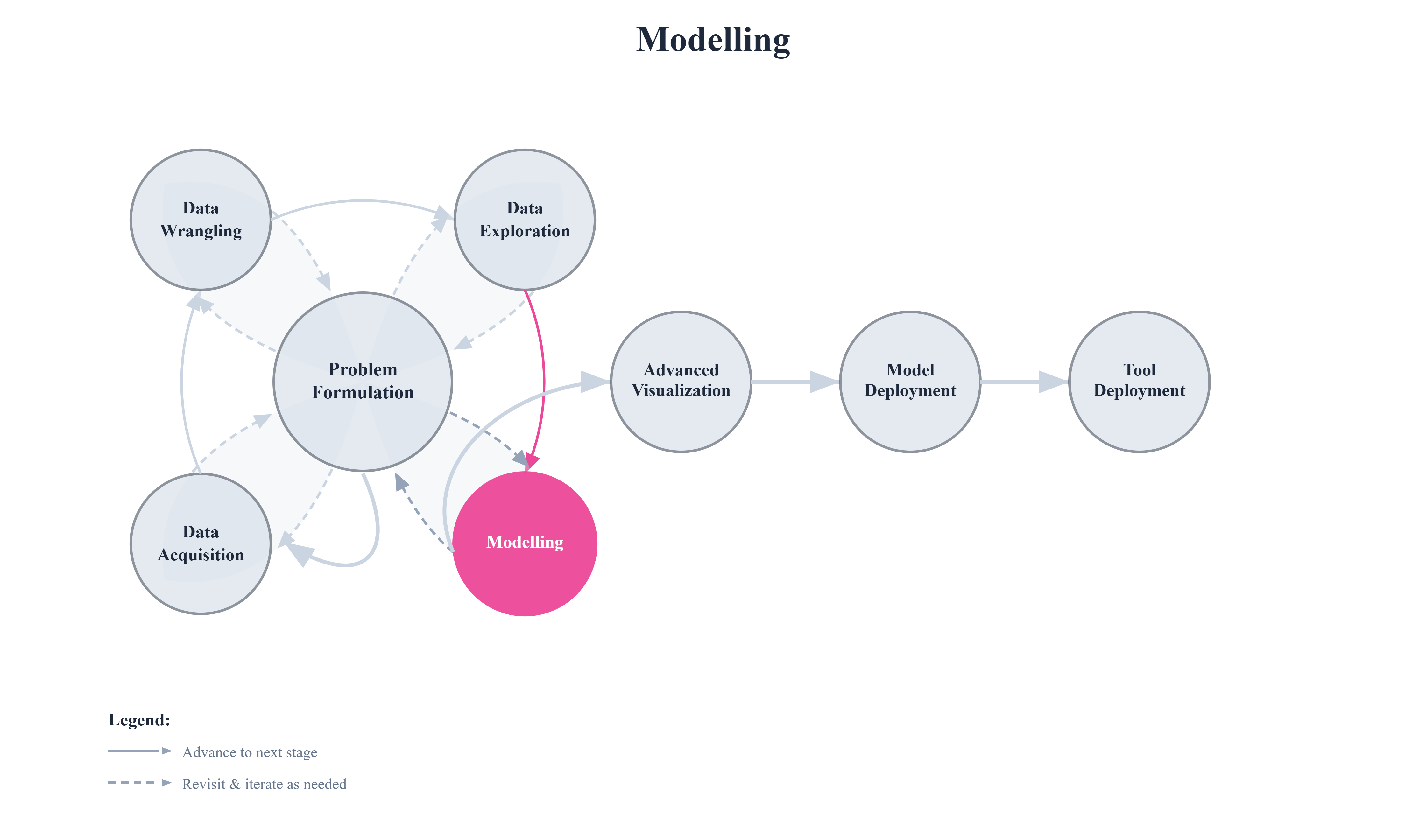

6. Modelling#

All the work done up to this point prepares us for this stage. Through data acquisition, wrangling, and exploration, we developed an understanding of what the data represents and ensured it is clean, consistent, and usable. Modelling builds directly on this foundation.

Modelling is the process of using data to learn patterns that can be generalized beyond the observed samples. A model is a mathematical or computational function that maps inputs to outputs by capturing relationships present in the data. Once learned, this function can be used to make predictions, assign labels, identify structure, or detect unusual behavior in new or unseen data.

Rather than memorizing individual data points, models aim to capture the underlying structure of the data. In practice, this often means approximating the data generating process or learning decision boundaries that separate different outcomes. The quality of a model depends not only on the algorithm used, but also on how well the data represents the real world and how carefully the modelling process is designed.

In this chapter, we begin by building intuition for what it means to model data. We then explore core modelling concepts such as training and evaluation splits, model complexity, underfitting and overfitting, and regularization. These ideas form the backbone of all modern machine learning methods. From there, we study commonly used supervised and unsupervised models, followed by strategies for evaluating their performance.

By the end of this chapter, you should understand not just how to apply models, but why they behave the way they do and how modelling decisions affect performance and generalization.