Academic Highlights from ICDM25

In this post, I review some of the most interesting works I saw presented at ICDM25. Each day of the event has a heading below. For each day, I summarize the works and presentations I found most interesting. A more exhaustive list of the papers accepted to ICDM25 and the full conference schedule can be found on the conference website.

Wednesday, November 12

The flight landed without incident in D.C. around 11PM on Veteran’s Day, and I was in my hotel, the West End Tapestry by Hilton by midnight. November 12th, it was my pleasure to meander down L Street toward 16th Ave. and arrive at the conference venue, the Captial Hilton, whose façade is shown above. The day began right away, with the ICDM workshop sessions frontloaded before the main conference proceedings beginning on Wednesday November 13th.

First Workshop on Grounding Documents with Reasoning, Agents, Retrieval, and Attribution (RARA)

As I struggled to connect to the conference’s complimentary Wi-Fi, I attended the First Workshop on Grounding Documents with Reasoning, Agents, Retrieval, and Attribution (RARA).

Retrieval-Augmented Reasoning - Hamed Zamani of UMass@Amherst

I had just collected the thoughts, sitting in the small conference room just a few feet from the podium, when the presentation on "Retrieval-Augmented Reasoning" by Hamed Zamani of UMass@Amerst started. He introduced the topic and told everyone to read his previous paper Retrieval Enhanced Machine Learning. He then summarized the generic framework for REML. Prediction models interact (albeit indirectly) with a retrieval model. Certain authors train a multistep reasoning model to interact with a search engine (Search-R1). The Search-R1 models learn in two phases, increasing the reward function first without resubmitting its queries and then second resubmitting the query and reaping the reward of even greater reward function values. In inference, Search-R1 model thinks, formulates a search query an retrieves information from the search engine that answers the latter.

Other authors explore REasoning-Enhanced Self-Training (REST). REST entails a type of self-attention where the reward of a previous iteration of the LLM reasoning model is subject to the reward function for the previous iteration. Others still explore "Pathways of Thought" or "Multi-Directional 'Thinking'". Multiple paths of thought heterodyne to a response and a centralizing final model synthesizes the pathway prediction models' thoughts into a final response. The discussion then moved on to retrieval, the consequences of the Robach Theorem, which implies that linear document ranking functions cannot be optimized, and the exploration of an approach that works around the consequences of that result.

5th IEEE International Workshop on Multimodal AI (MMAI)

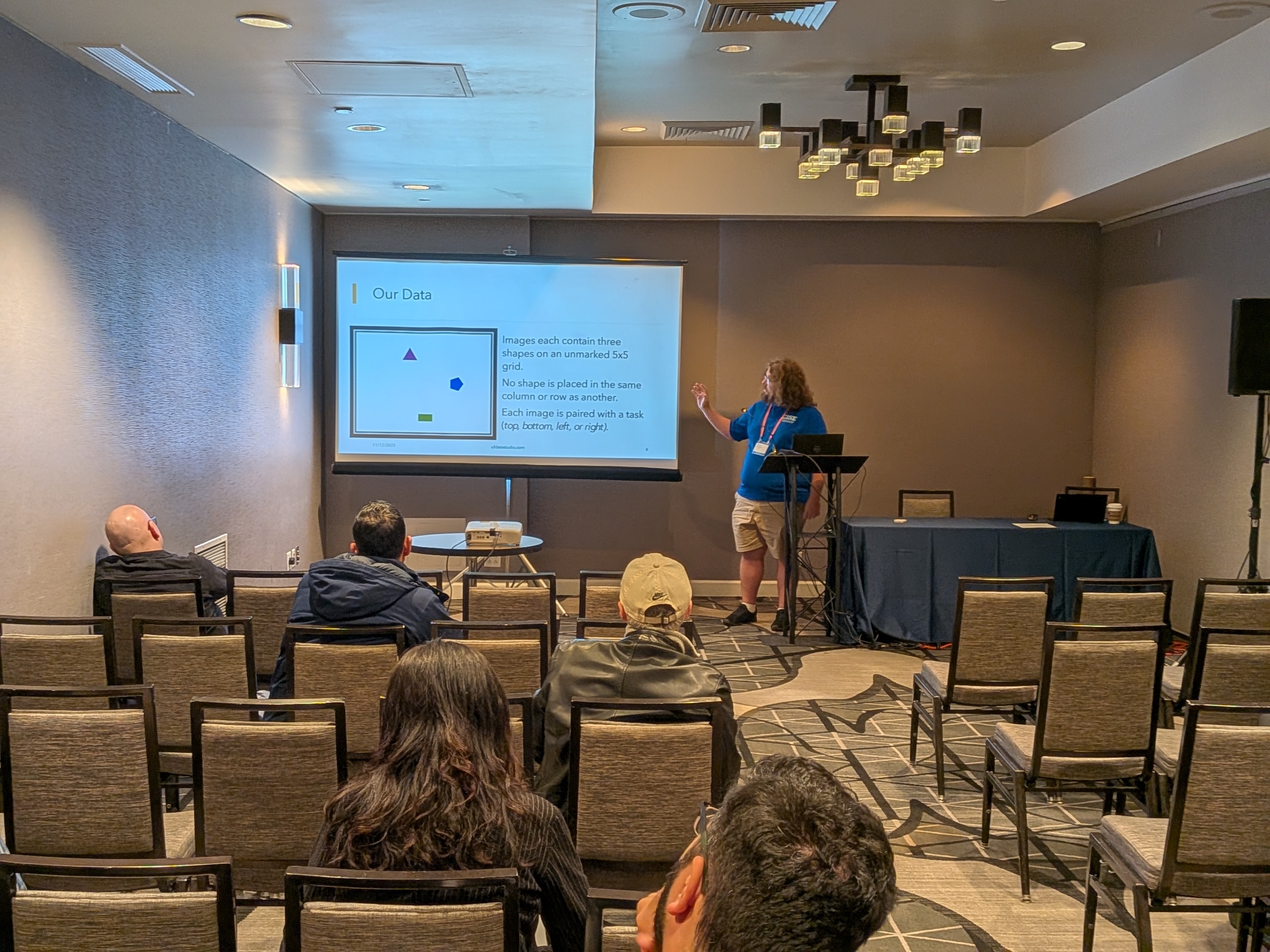

I presented at the 5th IEEE International Workshop on Multimodal AI (MMAI), and I was proud to see my name in the schedule for a workshop as I went into the room after lunch that day. The workshop began at 1:30PM, but the poster spotlight presentations, and the accompanying poster session, were not scheduled until the end of the workshop. For a couple hours, interrupted by a short coffee break at 2:30, I was sitting in the South America A conference room, listening to the other presentations and mentally rehearsing my presentation. Here are a couple highlights from other speakers in the workshop

Smart Vision-Language Reasoners – Lucas Roberts, Independent

Inspired by shortcomings in the focuses of submissions to the first NuerIPS workshop on Math, Roberts and Roberts (2025) combine Frozen Dino and SigLip to encode image and text. Cross attention for image and query text is applied, and QV-fusion concatenates all outputs with two additional hidden layers and GeLU. This architecture outperforms previous SOTA approaches to MathVQA and stands up against an ablation study. Lucas Roberts, who presented the work, says that key takeaways include considering when image or text input modalities are appropriate for MathVQA. He also recommends studying audio input for MathVQA, which has been hitherto completely ignored by the multimodal evaluation community.

A Practical Synthesis of Detecting AI-Generated Textual, Visual, and Audio Content – Lele Cao, King/Microsoft

Lele Cao presented his work at the offices of King’s, the makers of Candy Crush, AI Labs, summarizing the state of the art for detection of malicious AI-generated content in a variety of modalities: Text, Visual, and Audio. In addition to the survey of the existing literature, Cao (2025) contributes a new perspective on the robustness, adaptability, and human-in-the-loop in these malicious generation detection pipelines. He also proposes a cross-modal taxonomy for detectors, a threat matrix that maps generative AI attacks in various modalities to the effectiveness of various detectors, and actionable deployment notes.

The entries in Cao’s threat matrix gave me pause. For some Attacker capability, the Text, Image/Video, or Audio defenses are rated as either Likely Effective, Partially Effective or Context-Dependent, or Ineffective. The Attacker capabilities enumerate the different ways that an attacker could augment raw model output to bypass detectors’ efforts. While only three capability-defense pairs are rated as Ineffective, these three are trivial. They are the ratings the provenance standard detections against unmarked models and model switching. Only for the “zero-effort (raw model output)” capability, are models consistently “Likely Effective.” The vast majority of entries are Partially Effective, and the only Likely Effective ratings outside the zero-effort capability are for provenance standard detection methods, which rely on the active cooperation of model creators.

Cao’s findings, summarized through the threat matrix, inspire concern despite the vagueness in the given labels. With any effort, it seems, an attacker can render detection methods significantly less likely to detect malicious AI-generated content in various modalities in many contexts. Further, there is no capability-detector pair for which the detector is “Almost Certainly Effective” or some semantically equivalent label, suggesting that detection accuracy is likely not particularly high for any pair.

My Presentation

My presentation at MMAI came at the end of the workshop, immediately preceeding a coffee break and the poster session. I believe I did well for my first academic presentation, and another post summarizing the finds of that paper will appear soon.

The slides for the presentation will also be available soon.

Thursday, November 13

Keynote: Why Networks Matter: Embracing Biological Complexity - John Quackenbush, Harvard

The Problem

Quackenbush is chair of the biostatistic department at Harvard and contributed to the Human Genome Project which reacted its pinnacle in Feb. 2001 with the completion of a draft of the human genome. Quackenbush introduces the human genome as the "parts list" for the components of a human cell. Although different cells express different genes, the genome is the same in all of them. There are other factors than the genome that contribute to the differentiation of these cells. Unveiling the mechanism of differentiation has been the subject of more recent work.

The cost of sequencing human genomes, measured both in time and money has steeply fell since the completion of the first draft in 2001. Additionally, new source of health information (medical data) has emerged. These factors have combined to enable more detailed investigations. Whereas it was first thought that the genome sequencing project would have deep impacts for diagnostic and preventative medicine, in practice attempts to apply the genome and other data sources to those problems have hitherto failed. Quackenbush points to IBM's Watson as a famous example of computational medicine's failure to leverage data in this way.

Quackenbush introduces the so-called "central dogma" of gene regulation. DNA encodes RNA that tells proteins how to fold. Proteins tell cells what to do, and it must be the role of some "activators" or "suppressors" to enable or disable expression of specific genes in differentiated cells. Analyzing protein folding itself is a computationally prohibative. Fruther, Quackenbush speaks to the complexities of gene regulation. Specifically, epigenetics, factors in a person's life that affect gene expression, can result in different phenotypes with the same genome.

AI was supposed to make many of these problems go away, but two problems prevent it from doing so. 1. Insufficient Data 2. No robust underlying model and no ground truth.

Further, the training objectives for AI systems are inherently flawed. Data Shift and Underspecification plague biomedical AI applications. This problem is not new. Wolpert et al. (1997) show that No Free Lunch (NFL) theorems exist for optimization algorithms in the general case and that optimization methods that claim better results must place restrictions on the character of the data to work around such NFL theorems. Quackenbush argues that we have been doing the same thing in AI for medicine.

NetZoo

Moving forward to his contribution, Quackenbush et al. have produced a collection called netZoo of medical data science tools that are named after various animals. This menagerie began with the PANDA (Glass et al., 2013) and has expanded ever since.

PANDA models gene regulation. Whereas previous approaches had built a matrix wherein each gene could regulate every other gene (a square 25000x25000 matrix), PANDA takes advantage of transcription factors, which are less numerous and models how they each regulate a group of genes at once to reduce the size of the model to a 2500x1600 matrix. The overall size reduction measured in weights is from 625M to just 4M.

Although revolutionary, PANDA's performance gains come from aggregation and, Quackenbush cautions, educated assumptions about what factors regulate the expression of what genes. The next model, LIONESS (Kuijjer et al., 2019), was designed to go from the population aggregate level to the individual. It works by first creating a population aggregate and then subtracting a subset of the population to derive an estimate of the subset's network and the network of its compliment. This enables not only the estimation of individual gene expression networks but the estimations of networks for larger subsets drawn on any phenotypical basis. This was applied to numerous gene regulation problems by dividing the gene regulation network along various axes.

Next, Quackenbush and his collaborators asked about the regulatory effect of genes of TFs and devised EGRET (Weighill et al., 2022). Blood samples were taken from 119 Yoruba individuals and the red blood cells were reverted to stem cells and redifferentiated. EGRET predicted that genetically regulated TFs linked to coronary disease and Chron's would appear in MC and LCL cells respectively, and this was borne out in full by the empirical data. EGRET was then applied to various problems including LAUD.

PHOENIX (Hossain et al., 2024), a neural ODE solver, was designed to solve the ODE posed by time series data of gene expression for all 25000 genes in the human genome. GRAND produced a database of regulatory networks for gene discovery.

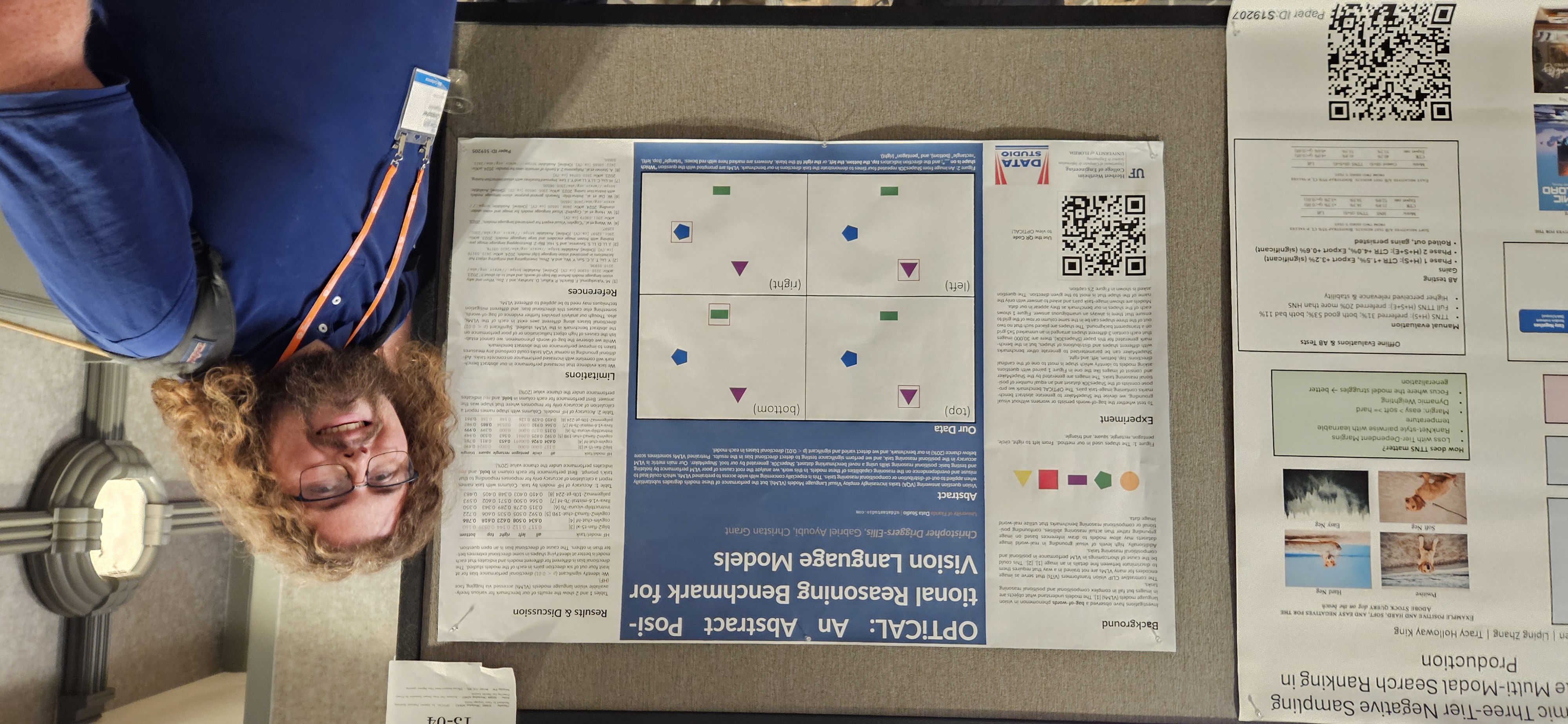

Poster Session

During the coffee breaks, it was my pleasure to present my OPTiCAL poster to a variety of researchers attending ICDM. Whereas MMAI had been opportunity to reach other researchers in the multimodal AI and VLM research space, the general poster session was an unparalleled opportunity to reach out to other researchers and show off the OPTiCAL benchmark to them.

I had such invigorating and engrossing conversations with the other researchers at this poster session that I did not notice that the first coffee break was over until half an hour after the fact and rushed to the next item on my agenda for Thursday: SABID

SABID2025 - Workshop on Solar & Stellar Astronomy Big Data

Solar and Stellar Astronomy, the study of stars and of the sun in particular with optical instruments is an important subfield of astronomy. I was reminded of this during the journey to Washington, DC by the news that intense solar flares on the other side of the world, literally, were driving strong aurora the night of my flight and that there would be a chance of seeing such auroras as far south as DC the night I arrived.

I saw no such aurora, but they were on my mind as I sat down for talks at the Workshop on Solar & Stellar Astronomy Big Data (SABID) around 11:15. What follows in this section is a summary of the presentations that I saw there.

Out of Sample Validation of MagNet

The talk began with a summary of how solar activity affects human technology. Not only are satellites over the Earth liable to feel the effects of solar wind, but the Perseverance rover on the surface of Mars is bombarded by strong solar wind thanks to the planets lacking magnetic field.

The author explained that Transverse vector fields are critical to understanding these disruptive events whereas our data for such events are incomplete. This is where machine learning comes in to fill the gap between real data and the data required. Magnet is trained and validated on HMI/SDO and MDI/SOHO magnetic data from 2010. The model was validated on OOS data new data were accessed from JSOC, BBSO and KSO. These data were collected with a different resolution and alignment than the training data and had to be preprocessed significantly to arrive at something on which the authors' MagNet could perform coherently.

Satisfactory correlation coefficients between ground truth and AI generated magnetographic data were achieved, and the presentation featured stunningly similar AI generated imagery to the ground truths. Though correlation coefficients of 0.94 and 0.78 were achieved, the authors ask why they fell short. They pin some of the problem on limitations in the precision of optical instruments, including the difference between ground-based and space astronomy.

Uncertainty-Aware Solar Flare REgression

This talk began with a recapitulation of solar phenomenon. In particular, the author elucidated how solar flares are typically observed in space using X-ray light astronomy, which cannot be performed from the ground thanks to the interference of Earth's atmosphere.

The author introduced three methods for quantifying uncertainty in solar flare observation: 1. Conformal Prediction (CP) 2. Quantile Regression (QR) 3. Conformalized quantile regression (CQR)

Each uncertainty quantification technique was placed in a greater architecture during an experiment. The primary metrics were interval length and % coverage of real observations with the uncertainty interval. CP showed better performance than the other methods but features a constant interval length that limits the interpretability of the results garnered through that method.

References

- Roberts, D. Roberts, L. Smart vision-language reasoners. MMAI@ICDM25. 2025

- Cao, L. A Practical Synthesis of Detecting AI-Generated Textual, Visual, and Audio Content. MMAI@ICDM25. 2025

- Wolpert D.H. et al. No free lunch theorems for optimization. IEEE Transactions on Evolutionary Computation 1(1). 1997

- Glass, K. et al. Passing Messages between Biological Networks to Refine Predicted Interactions. PLoS ONE 8(5). 2013

- Kuijjer, M.L. et al. Estimating Sample-Specific Regulatory Networks. iScience 14. 2019

- Weighill, D. et al. Predicting genotype-specific gene regulatory networks. Genome Research. 2022

- Hossain, I. et al. Biologically informed NeuralODEs for genome-wide regulatory dynamics. Genome Biology. 2024